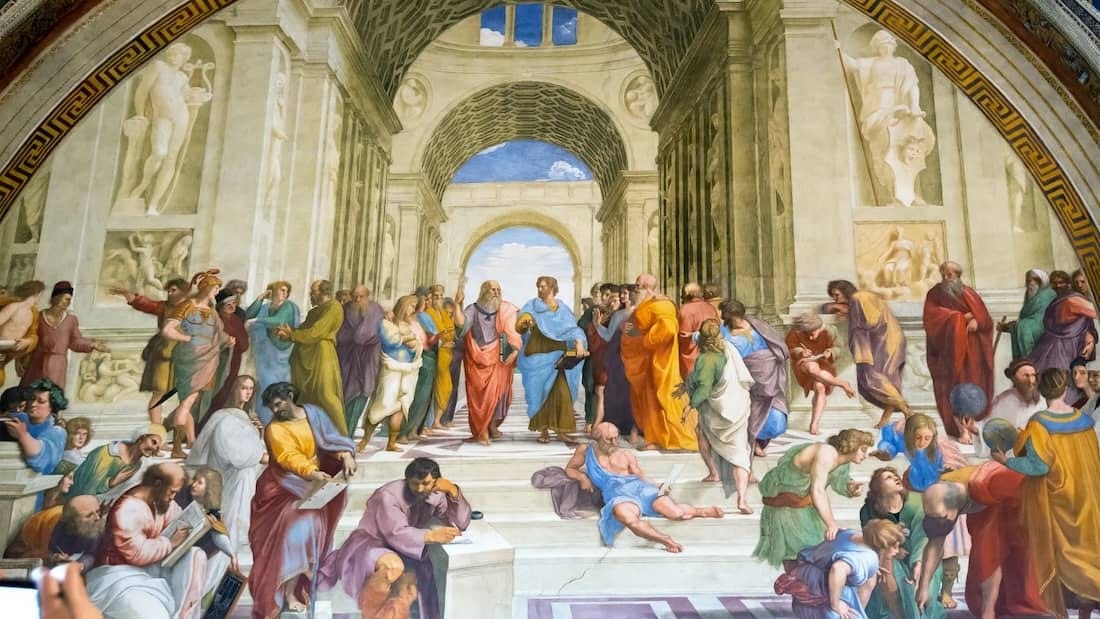

Over the last two or three decades, something happened to humanistic education that nobody really planned and nobody really noticed until it was already done. Responding to economic pressure and the anxieties of students carrying enormous debt, universities gradually defunded the liberal arts in favor of what we politely called “practical” education. STEM, business, pre-professional programs: these were the future. Majoring in philosophy or literature started to feel like a luxury, the intellectual equivalent of ordering dessert when you can’t afford dinner. The market had spoken, and the market had no patience for Montaigne.

But here is the thing about demand: it doesn’t disappear just because the institution stops supplying it. The hunger for humanistic thought and serious engagement with history, literature, ethics, aesthetics, and the full range of human experience didn’t go away. It migrated. It moved to the internet, where it found new and surprisingly passionate homes: YouTube video essays, Substack newsletters, long-form podcasts, sprawling Twitter threads where scholars and amateurs argued about historiography at midnight. Some of this content is genuinely excellent. You can find more rigorous engagement with certain philosophical questions in a well-done three-hour podcast than in many undergraduate seminars. The appetite, it turns out, was never the problem.

The problem is the format.

What a classroom does, even a mediocre one, that the internet structurally cannot do is force productive friction. In a classroom, you cannot scroll past the argument you find uncomfortable. You cannot close the tab when the professor asks you to defend your interpretation. You cannot anonymously share your opinions. Someone makes a claim, and you have to find words for what you actually think, not what performs best in front of an audience. That process of articulation under pressure, of being required to sit with difficulty rather than click away from it, is where most of the actual intellectual work of the humanities happens. The insights don’t necessarily come from reading the text. They come from what happens when you try to explain it to someone who disagrees with you.

Online discourse has precisely inverted this. The online incentive structure rewards speed, confidence, blank sarcasm, and tribal signaling. The most engaging response is rarely the most accurate one; it’s the one that makes your side feel validated and the other side look foolish. This isn’t a character flaw inherent to internet users, but, I think, a natural byproduct of an online environment. Twitter and its successors are architecturally optimized for performance, not comprehension.

This matters for something larger than education. The humanities, when taught well, train a specific and rather rare cognitive skill: the ability to genuinely inhabit a viewpoint that is not your own before you evaluate it. Not to pretend to consider it, or perform open-mindedness, but to actually understand it from the inside, to feel the force of the argument, to see why a reasonable person might hold it, to find the strongest version of the position rather than the weakest. This is what close reading is, what historical empathy is, what serious moral philosophy demands. It is not a soft skill. It is a rigorous practice that takes years to develop, and it is precisely the practice that social media most consistently and reliably destroys.

It is perhaps not a coincidence, then, that our retreat from the humanities in formal education and our migration of humanistic discourse to hostile online environments happened in the same decades that produced our current levels of political polarization. I don’t want to oversimplify the causal story because polarization has many parents. But I do think we quietly dismantled the institutional training ground for the cognitive habits that make good-faith disagreement possible, and at the same time replaced it with an environment that punishes those habits and rewards their opposites.

The reassuring part is that AI, among other factors, may be forcing a correction that market logic previously prevented. If the skills that are hardest to automate are precisely the ones the humanities cultivate, such as judgment, moral reasoning, the ability to navigate genuine ambiguity, to communicate across difference, and to understand what motivates other people, then the economic case for a humanistic education may reassert itself in ways that the last few decades foreclosed.

That is, in a sense, what the humanities were always supposed to do. We just forgot, for a while, why that mattered.

The format is not incidental to the outcome; it is the outcome. Or, as Marshall McLuhan said, the medium is the message. Saving the humanities means recovering the institutions and structures (classrooms, seminars, the productive discomfort of being genuinely challenged) that make them possible. Not because the internet lacks good content. It has extraordinary content. (It also has unremarkable slop.) But content without scaffolding is not education. It is entertainment (infotainment?) dressed up in the vocabulary of thought. And maybe we have enough of that. College seminars are not comment sections.