The Journal of Writing Assessment

My quasi-experimental study, “The Effects of Automated Writing Evaluation Technology on Improving Student Writing,” has been published by The Journal of Writing Assessment.

My “Academic Minute”

On 15 February 2024 I was featured on NPR’s The Academic Minute, where I discussed the difference between human and artificial intelligence. More poetic than technical, my essay attempts to distinguish between the reasoning humans do with words and the number crunching that artificially intelligent computer programs do with vectors and probability distributions. I suggest that scientific knowledge is already somewhat artificial, insofar as it is a model of reality and is limited to describing its findings in two negative modes: rejecting, or failing to reject, the null hypothesis. We can still know many things using only those two modes, but human reasoning and experience can ponder where science can’t. I believe there’s a similar dynamic with the “knowledge” or “intelligence” of AI, which arrives at the right answer through a process of elimination. You can infer the right answer that way, but it’s not the same as filling in the blank.

Survey of AI Anxiety Among Educators

In late November 2023, I published in The Conversation a summary of the results of a survey I conducted on AI anxiety among educators. My primary point is that feelings and anxieties about AI and education are complicated and multifaceted. I think fear and anxiety about how AI will affect education are legitimate, but I don’t think this means the end of education, at least in the short term.

Generative AI and its Impact on Higher Ed

I have spent much of my first year on the tenure track researching and writing about Large Language Models (LLMs) and generative AI, because these technologies are closely related to my dissertation research on automated writing evaluation (AWE). For the Dallas Morning News, I wrote an op-ed on why I think ChatGPT represents an opportunity, rather than a crisis, for higher education. I also gave an interview to The Texas Standard on how and why educators should work with, rather than against, generative AI in the writing classroom.

Automated Writing Evaluation

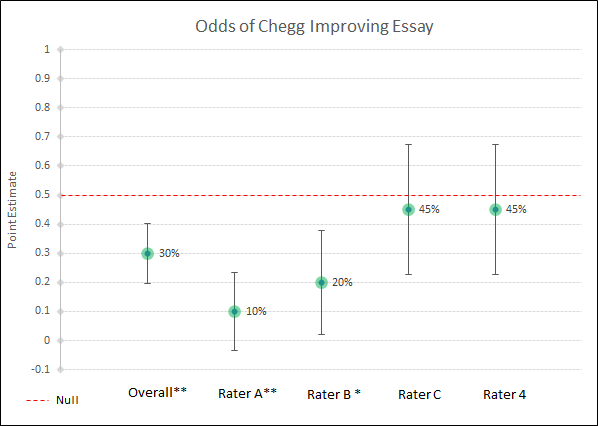

Adapted from chapter three of my dissertation, my article, “The Effects of Automated Writing Evaluation on Improving Student Writing,” is currently under review. The article describes an exploratory quasi-experiment that tests how much a popular automated writing evaluation (AWE) program improves student writing in the eyes of composition instructors. Four instructors were each given 20 pairs of papers, with one paper in each pair treated by the AWE program. The instructors were then asked to read each pair and select the better paper, and I calculated an overall point estimate of the probability that an instructor would designate the AWE-treated essay as “better.” The treated essays were selected as better only 30% of the time (95% CI, 20–40%), which is significantly below the null-hypothesis value of 50%. This tentatively suggests that the AWE program fails to improve student writing. Implications are discussed.
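The arithmetic behind those figures can be sketched as follows. This is an illustration, not the article's actual analysis. It assumes that all 4 × 20 = 80 judgments are pooled as independent yes/no trials, so 30% corresponds to 24 "treated is better" choices. It also assumes a Wald (normal-approximation) interval and an exact two-sided binomial test, since the summary above does not say which methods were used. Pooling this way ignores any clustering by instructor.

```python
import math

# Assumed counts: 4 instructors x 20 pairs = 80 pooled judgments,
# 24 of which (30%) chose the AWE-treated essay as "better".
n = 4 * 20
k = 24

p_hat = k / n  # point estimate of P(treated essay judged "better")

# Wald (normal-approximation) 95% confidence interval for a proportion.
z_crit = 1.96
se = math.sqrt(p_hat * (1 - p_hat) / n)
ci_low, ci_high = p_hat - z_crit * se, p_hat + z_crit * se


def binom_cdf(x: int, n: int, p: float) -> float:
    """P(X <= x) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(x + 1))


# Exact two-sided binomial test of H0: p = 0.5. Under H0 the distribution
# is symmetric, so the two-sided p-value is twice the lower tail (k < n/2).
p_value = min(1.0, 2 * binom_cdf(k, n, 0.5))

print(f"estimate = {p_hat:.2f}, 95% CI = ({ci_low:.2f}, {ci_high:.2f})")
print(f"two-sided exact binomial p = {p_value:.5f}")
```

Under these assumptions, the Wald interval rounds to the reported 20–40%. The exact test also rejects the 50% null by a wide margin. A mixed-effects model with instructor as a random effect would be one way to relax the independence assumption.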

Gamification

My chapter, “Beware the Button Masher: Gamified Learning and the Need for Careful Assessment,” appears in the peer-reviewed, edited collection, Gamification in the RhetComp Curriculum, published by Vernon Press. In it, I consider questions of educational assessment as they relate to gamified pedagogy, and I argue that attention to validity and reliability is crucial if gamification is to succeed as an educational intervention. In particular, I explore three areas of concern related to the creation of fair and valid assessment instruments: 1) the effects of students’ attitudes on who selects games-based assignments, and the potential influence of those effects on extrinsic motivation; 2) the frequency and type of in-game assessment prompts that stimulate metacognitive reflection while maintaining a gamified environment; and 3) the promotion of inter- versus intra-competition, and the regulation of competition to maximize student learning gains.

Dissertation

The Android English Teacher: Writing Education in the Age of Automation

Supported by a summer-long grant from the Purdue Research Foundation, I conducted a small-n quasi-experiment to test the effects of automated writing evaluation (AWE) technology on improving student writing. The popular online tutoring site Chegg.com claims that its EasyBib Plus AWE tool can improve both writing and writers. Such claims mark a shift in AWE’s focus from summative to formative assessment and raise questions about the future of in-person education. Drawing on the history of writing assessment and the results of the experiment, I ultimately argue against using AWE for formative writing instruction. In an era of growing automation, I maintain that a human-centered pedagogy remains one of the most durable, important, effective, and transformative ingredients of a quality education.

Writing Assessment

In 2018, I was appointed Assessment Research Coordinator for a program-wide evaluation of Purdue’s composition program. In response to political pressures, the department sought to collect empirical data demonstrating that we were meeting our learning outcomes. I led a team in piloting six common assignments designed to measure different aspects of our program outcomes. This project required me to construct essay assignments and assessment instruments, conduct rating and norming sessions, and statistically analyze and visualize both quantitative and qualitative data. This year-and-a-half-long project gave me ample experience in writing program administration and institutional assessment. I wrote about my findings and recommendations in a published report that was distributed across the College of Liberal Arts.