A perennial debate among teachers seasoned and new concerns how much lecture versus discussion to feature in class. The debate is especially relevant to teachers of liberal arts subjects, since the content of such courses is not always conducive to rote learning techniques. Liberal arts subjects often require the completion of previously assigned reading, and even if enough students read to engage in fruitful discussion there remains the risk of devolving into debate rather than dialogue. To complicate matters further, lectures and class discussions are frequently, and falsely, pitted against one another, viewed as binary opposites and sometimes filtered through a political lens which codes the former as conservative and the latter as progressive. Consider the image of a professor lecturing to a class of students dutifully taking notes versus a classroom of circled desks with students and teacher alike engaging in a dynamic conversation.

I believe there are more or less appropriate times and subjects for either lectures or discussions, and I don’t buy that either has a particularly salient political character; after all, even the teacher discussing texts in a circle still assigns grades at the end of the term. But there are challenges to both lectures and class discussions that I think frustrate new teachers in particular. Lectures aren’t especially engaging unless done well, which comes with time and practice. On the other hand, discussions can intimidate students to the point of non-participation, especially if the topic of discussion is particularly controversial. Also, the skill of facilitating and nourishing discussions is underrated and quite challenging, another pedagogical virtue that comes with time and practice. Additionally, I find neither mode particularly effective for beginning a class. Opening with a lecture (especially in the early morning) risks students nodding off to sleep, as their engagement with lectured material is entirely determined by their individual commitment. Conversely, jumping into a discussion right away often fails to take off, as any teacher can attest, since students can be reluctant to break the ice.

Enter the hypothetical. The hypothetical is exactly as it sounds: a contrived example or problem related to the day’s concepts is posed to students who must reason through its various frictions. Incorporating hypotheticals into liberal arts classes is particularly effective, as it occupies a middle ground between lecture and discussion, combines the best elements of both, and requires no prior reading. Like a lecture, a hypothetical allows the instructor discretion in guiding students more or less forcefully towards the concepts intended for learning. And like a discussion, it engages students’ creative and critical thinking muscles, prompting them to respond and participate. Hypotheticals are a great icebreaker to boot; completing the reading is not required to participate in the deliberation of the hypothetical, as its contrived parameters present enough content for participation. It also doesn’t risk intimidating students from offering controversial opinions since its hypothetical nature provides a comfortable space for intellectual exploration.

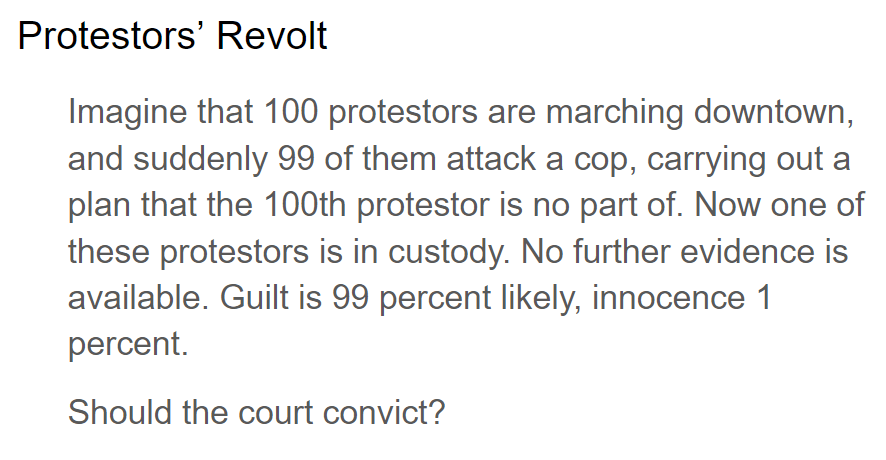

Here is one hypothetical I offer students in one of my first-year composition courses:

Here’s what typically happens when I pose it. The majority of students almost immediately answer “no,” which allows me to oppose the class consensus to encourage deeper thinking. For instance, I point out that only a 1% chance of a wrongful conviction is quite good, especially considering current estimates put the US wrongful conviction rate somewhere between 2-10%. I then ask which kind of evidence would persuade them to convict, to which they usually respond “eyewitness testimony,” “DNA evidence,” and “video footage.” All of which, I suggest, is likely less than 99% accurate. So what gives, I press.

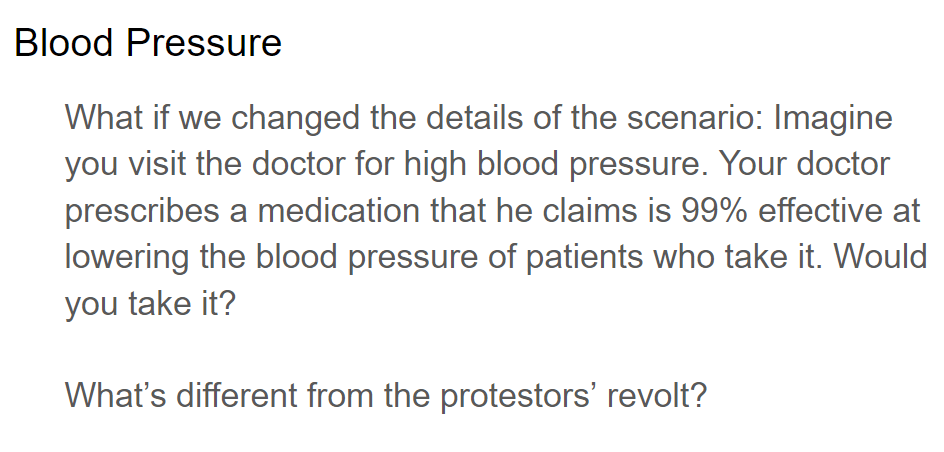

I then switch to another hypothetical, this time involving blood pressure medication, to which the entire class always answers “yes.” (As a side note, a great technique is to juxtapose two structurally-similar hypotheticals that nevertheless induce students to opposite conclusions; the cognitive dissonance is generative.)

“So what’s the difference?” I ask. Students usually reason many of the differences pretty well, in my experience: The stakes are different for sending an innocent person to prison compared to leaving blood pressure untreated; the former involves deciding someone else’s fate and the latter your own; and there’s a matter of “trust” when it comes to doctors that feels distinct from the consequentialism of the judicial system.

The larger point I make is this: The American judicial system (in theory, at least) does not consider the probability of committing a crime as admissible evidence, knowledge of guilt. (Thank God Minority Report is just a movie.) Even if there’s only a 1% chance, you are presumed innocent until proven guilty. In American medical science, however, the probability of a drug’s effectiveness, inferred from carefully controlled experimental trials, is considered knowledge. In fact, inferring the effectiveness of medical treatments from samples is the only way to know anything in medicine at all; it is impossible to “witness” or testify to, at least rigorously and systematically, the evidence of medical efficacy. These hypotheticals therefore elegantly demonstrate the difference between two types of information: observational knowledge, obtained and verified through experience, and probabilistic belief, inferred through studies of manipulated samples.

In the larger context of the course, we are discussing the nature of knowledge, how it is we can say we know something. The goal of this class period is to demonstrate how knowledge is context-dependent; what counts as knowledge in a medical trial is not what counts as knowledge in a court of law. Does that mean knowledge is whatever we say it is? No, it means that certain domains (medicine, law, science) have developed their own rules that govern how knowledge in that domain is understood and counted. Awareness that there are differences in how we derive knowledge is a concept I discuss in my first-year composition courses because I believe the idea is essential to understand before reading and writing academic research at the college level (your mileage may vary). This hypothetical helps students to intuit the primary takeaway of the lesson, the constructed nature of knowledge, without me lecturing at them about it.